- Prerequisites

- What is Llama 3?

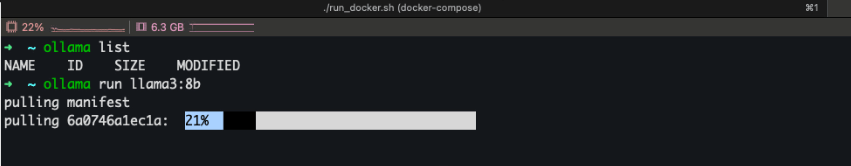

- Step 1: Run the Llama 3 Model

- Step 2: Chat With Llama 3

- Step 3: Use Llama 3 With Ollama API

- Step 4: Use Llama 3 With Python

- Frequently Asked Questions

- Conclusion

- References

- Related YouTube Videos

Llama 3, developed by Meta AI, is one of the most capable open-source large language models available today. It can generate text, assist with programming, answer questions, summarize information, and help with research tasks. Instead of relying on cloud services like ChatGPT or other paid APIs, developers can run Llama 3 locally on their own machines using tools like Ollama.

In this guide, we will learn how to run Llama 3 locally using Ollama, step by step. By the end of this tutorial, you will have a ChatGPT-like AI assistant running directly on your computer, with no API costs and, once the model is downloaded, no internet dependency.

Running Llama 3 locally has several advantages: your prompts stay on your machine, there are no usage limits, and you keep full control over your AI workflows. That makes it an excellent option for developers, researchers, and AI enthusiasts.

Prerequisites

Before running Llama 3 locally using Ollama, make sure the following dependency is already available on your system:

- Ollama, installed and runnable from your terminal

System Requirements

Running large language models requires sufficient system resources.

Minimum Requirements

- 8 GB RAM

- Modern CPU

- 10 GB free disk space

- Internet connection (only required for first model download)

Recommended Setup

For smoother performance:

- 16 GB RAM

- SSD storage

- GPU support (optional but helpful)

Once the model is downloaded, it can run fully offline.
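The checks above can be sketched as a small preflight script. This is a minimal sketch using only the Python standard library; the function names `enough_disk` and `preflight` are made up for illustration, and the 10 GB threshold is simply the minimum listed above. It only inspects the disk that holds the current directory, which may not be the disk where Ollama stores its models.

```python
import shutil

REQUIRED_FREE_GB = 10  # minimum free disk space listed in this guide


def enough_disk(free_bytes: int, required_gb: int = REQUIRED_FREE_GB) -> bool:
    """Return True if free_bytes covers required_gb (1 GB counted as 10**9 bytes)."""
    return free_bytes >= required_gb * 10**9


def preflight(path: str = ".") -> dict:
    """Run two quick checks: is `ollama` on PATH, and is there enough free disk?"""
    free_bytes = shutil.disk_usage(path).free
    return {
        "ollama_on_path": shutil.which("ollama") is not None,
        "free_gb": round(free_bytes / 10**9, 1),
        "disk_ok": enough_disk(free_bytes),
    }


print(preflight())
```

If `ollama_on_path` comes back as `False`, install Ollama first or open a new terminal so the updated PATH is picked up.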

What is Llama 3?

Llama 3 is a large language model created by Meta AI. It is designed to understand and generate natural language, making it useful for many AI-powered tasks.

Common use cases include:

- AI chatbots

- coding assistants

- content generation

- research assistance

- document summarization

When combined with Ollama, Llama 3 becomes extremely easy to run locally without complicated setup.

Step 1: Run the Llama 3 Model

Once Ollama is installed, running the model takes a single command. Open your terminal and run:

    ollama run llama3:8b

When you run this command for the first time:

- Ollama downloads the Llama 3 8B model files

- The model is stored locally on your machine

- A chat interface starts in the terminal

The download may take a few minutes depending on your internet speed.

The Llama 3 8B model is lightweight enough to run on most modern laptops while still providing strong AI capabilities.
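To confirm that the model really is stored locally, you can ask the Ollama server which models it has. The sketch below assumes the server is running at the address used later in this guide (`http://localhost:11434`) and that its `GET /api/tags` endpoint returns a JSON body with a `models` list whose entries carry a `name` field. The helper names and the sample data are hypothetical, written by hand so the parsing part runs without a server.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # local server address used in this guide


def model_names(tags_response: dict) -> list:
    """Extract model names from the JSON body returned by GET /api/tags."""
    return [m["name"] for m in tags_response.get("models", [])]


def is_downloaded(model: str, base_url: str = OLLAMA_URL) -> bool:
    """Ask the local Ollama server whether `model` is already stored locally."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return model in model_names(json.load(resp))


# Offline demonstration with a hand-written sample body (not real server output).
sample = {"models": [{"name": "llama3:8b"}, {"name": "llama3:70b"}]}
print(model_names(sample))
```

With the server running, `is_downloaded("llama3:8b")` should return `True` once the first download has finished.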

Step 2: Chat With Llama 3

After the model loads, you will see a prompt in your terminal where you can start interacting with the model. For example:

    >>> Explain artificial intelligence in simple terms

Llama 3 will generate a response directly on your computer. You now have your own AI assistant running locally.

Step 3: Use Llama 3 With Ollama API

Ollama also exposes a local API so developers can integrate Llama 3 into applications. The Ollama server runs locally at:

    http://localhost:11434

Example API request:

    curl http://localhost:11434/api/generate -d '{
      "model": "llama3:8b",
      "prompt": "Explain machine learning"
    }'

By default, the reply arrives as a stream of JSON objects, one per line, each carrying a fragment of the generated text. This API allows you to build applications such as:

- AI chatbots

- developer assistants

- automation tools

- document summarization tools
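An application that calls `/api/generate` without turning streaming off has to stitch the streamed reply back together. The sketch below shows one way to do that, assuming each streamed line is a JSON object with a `response` fragment and a `done` flag on the final line. The function name `join_stream` and the sample lines are hypothetical stand-ins for a real stream.

```python
import json
from typing import Iterable


def join_stream(lines: Iterable[bytes]) -> str:
    """Concatenate the "response" fragments of a streamed /api/generate reply."""
    parts = []
    for raw in lines:
        if not raw.strip():
            continue  # skip blank lines between chunks
        chunk = json.loads(raw)
        parts.append(chunk.get("response", ""))
        if chunk.get("done"):
            break  # the final chunk marks the end of the reply
    return "".join(parts)


# Hand-written sample lines standing in for a real stream.
sample_stream = [
    b'{"response": "Machine ", "done": false}',
    b'{"response": "learning", "done": false}',
    b'{"response": "", "done": true}',
]
print(join_stream(sample_stream))  # prints "Machine learning"
```

With the `requests` library, the same function can consume a live reply by posting with `stream=True` and passing `response.iter_lines()` as the argument.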

Step 4: Use Llama 3 With Python

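You can also integrate Ollama with Python to build AI applications. The example below uses the third-party requests library and sets "stream" to False so that the server returns a single JSON object; with the default streaming reply, response.json() would fail to parse the body. Example:

    import requests

    # Send one prompt to the local Ollama server and wait for the full reply.
    response = requests.post(
        "http://localhost:11434/api/generate",
        json={
            "model": "llama3:8b",
            "prompt": "Write a short story about AI",
            "stream": False,  # return one JSON object instead of a stream
        },
        timeout=300,
    )
    response.raise_for_status()

    # The generated text is in the "response" field of the returned JSON.
    print(response.json()["response"])

This approach is useful for building: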

- AI-powered applications

- chatbots

- automation tools

- developer tools
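For chatbots in particular, each request needs to carry the earlier turns of the conversation. A minimal sketch of that idea follows, assuming Ollama's `/api/chat` endpoint, which takes a `messages` list of `role`/`content` pairs and, with streaming off, returns the reply under `message.content`. The helper names are hypothetical, and the standard library's `urllib` is used here instead of `requests` only to keep the sketch dependency-free.

```python
import json
import urllib.request

CHAT_URL = "http://localhost:11434/api/chat"  # assumed chat endpoint on the local server


def build_chat_payload(history: list, user_message: str, model: str = "llama3:8b") -> dict:
    """Return a request body holding the prior turns plus the new user message."""
    messages = history + [{"role": "user", "content": user_message}]
    return {"model": model, "messages": messages, "stream": False}


def send_chat(payload: dict, url: str = CHAT_URL) -> str:
    """POST the payload to the local server and return the assistant's reply text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=300) as resp:
        return json.load(resp)["message"]["content"]


# Build a payload offline; the history below is made-up example data.
history = [
    {"role": "user", "content": "What is AI?"},
    {"role": "assistant", "content": "AI is software that performs tasks that normally need human intelligence."},
]
payload = build_chat_payload(history, "Give one everyday example.")
print(json.dumps(payload, indent=2))
```

With the server running, `send_chat(payload)` returns the reply text; appending that reply to `history` as an `assistant` message keeps the conversation going on the next turn.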

Frequently Asked Questions

Can Llama 3 run locally?

Yes. Llama 3 can run locally using tools like Ollama. Once downloaded, the model can run completely offline.

Is Ollama free?

Yes. Ollama is free and allows users to run multiple open-source AI models locally.

How much RAM is required for Llama 3?

The minimum requirement is 8 GB RAM, but 16 GB RAM is recommended for better performance.

Can Llama 3 run without a GPU?

Yes. Llama 3 can run on a CPU, but performance improves significantly with GPU acceleration.

Conclusion

Running Llama 3 locally using Ollama is one of the easiest ways to experiment with modern AI models. With just a single command, you can run a powerful AI assistant directly on your computer and start building your own AI applications. For developers and AI enthusiasts, learning to run models locally opens the door to building private AI tools, automation systems, and powerful applications without relying on external APIs.